Credit Lending

By: Matt Sifferlen On January 17th, we celebrated the 308th birthday of one of America's most famous founding fathers, Ben Franklin. I've been a lifelong fan of his after reading his biography while in middle school, and each year when his birthday rolls around I'm inspired to research him a bit more since there is always something new to learn about his many meaningful contributions to this great nation. I find Ben a true inspiration for his capacity for knowledge, investigation, innovation, and of course for his many witty and memorable quotes. I think Ben would have been an exceptional blogger back in his day, raising the bar even higher for Seth Godin (one of my personal favorites) and other uber bloggers of today. And as a product manager, I highly respect Ben's lifelong devotion to improving society by finding practical solutions to complex problems. Upon a closer examination of many of Ben's quotes, I now feel that Ben was also a pioneer in providing useful lessons in commercial fraud prevention. Below is just a small sampling of what I mean. “An ounce of prevention is worth a pound of cure” - Preventing commercial fraud before it happens is the key to saving your organization's profits and reputation from harmful damage. If you're focused on detecting fraud after the fact, you've already lost. “By failing to prepare, you are preparing to fail.” - Despite the high costs associated with commercial fraud losses, many organizations don't have a process in place to prevent it. This is primarily due to the fact that commercial fraud happens at a much lower frequency than consumer fraud. Are you one of those businesses that thinks "it'll never happen to me?" “When the well’s dry, we know the worth of water.” - So you didn't follow the advice of the first two quotes, and now you're feeling the pain and embarrassment that accompanies commercial fraud. Have you learned your lesson yet? 
“After crosses and losses, men grow humbler and wiser.” Ah, no lender likes losses. Nothing like a little scar tissue from "bad deals" related to fraud to remind you of decisions and processes that need to be improved in order to avoid history repeating itself. “Honesty is the best policy.” - Lots of businesses stumble on this part, failing to communicate when they've been compromised by fraud or failing to describe the true scope of the damage. Be honest (quickly!) and set expectations about what you're doing to limit the damage and prevent similar instances in the future. “Life’s tragedy is that we get old too soon and wise too late.” - Being too late is a big concern when it comes to fraud prevention. It's impossible to prevent 100% of all fraud, but that shouldn't stop you from making sure that you have adequate preventive processes in place at your organization. “Never leave that till tomorrow which you can do today.” - Get a plan together now to deal with fraud scenarios that your business might be exposed to. Data breaches, online fraud and identity theft rates are higher than they've ever been. Shame on those businesses that aren't getting prepared now. “Beer is living proof that God loves us and wants us to be happy.” - I highly doubt Ben actually said this, but some Internet sites attribute it to him. If you already follow all of his advice above, then maybe you can reward yourself with a nice pale ale of your choice! So Ben can not only be considered the "First American," but he can also be considered one of the first fraud prevention visionaries. Guess we'll need to add one more thing to his long list of accomplishments!

By: Teri Tassara In my blog last month, I covered the importance of using quality credit attributes to gain greater accuracy in risk models. Credit attributes are also powerful in strengthening the decision process by providing granular views on consumers based on unique behavior characteristics. Effective uses include segmentation, overlay to scores and policy definition – across the entire customer lifecycle, from prospecting to collections and recovery. Overlay to scores – Credit attributes can be used to effectively segment generic scores to arrive at refined “Yes” or “No” decisions. In essence, this is customization without the added time and expense of custom model development. By overlaying attributes to scores, you can further segment the scored population to achieve appreciable lift over and above the use of a score alone. Segmentation – Once you have made your “Yes” or “No” decision based on a specific score or within a score range, credit attributes can be used to tailor your final decision based on the “who”, “what” and “why”. For instance, suppose you have two consumers with the same score. Credit attributes will tell you that Consumer A has a total credit limit of $25K and a balance-to-limit ratio (BTL) of 8%; Consumer B has a total credit limit of $15K, but a BTL of 25%. This insight will allow you to determine the best offer for each consumer. Policy definition – Policy rules can be applied first to get the desirable universe. For example, an auto lender may have a strict policy against giving credit to anyone with a repossession in the past, regardless of the consumer’s current risk score. High quality attributes can play a significant role in the overall decision making process, and their expansive usage across the customer lifecycle adds greater flexibility, which translates to faster speed to market. In today’s dynamic market, credit attributes that are continuously aligned with market trends and purposed across various analytical uses are essential to delivering better decisions.
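The three uses described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the score cutoff, the BTL threshold, the segment labels and the `Consumer` fields are all hypothetical and are not taken from any actual lending policy or attribute set.

```python
from dataclasses import dataclass


@dataclass
class Consumer:
    name: str
    score: int                  # generic risk score
    total_credit_limit: float   # in dollars
    btl: float                  # balance-to-limit ratio, 0.0 to 1.0
    has_repossession: bool = False


# Hypothetical thresholds, chosen only to make the example concrete.
SCORE_CUTOFF = 680
LOW_BTL = 0.10


def decide(consumer: Consumer) -> str:
    # Policy definition: rules are applied first to get the desirable universe.
    if consumer.has_repossession:
        return "decline (policy)"
    # Score: the basic "Yes" or "No" decision.
    if consumer.score < SCORE_CUTOFF:
        return "decline (score)"
    # Segmentation: attributes tailor the outcome among approved consumers.
    if consumer.btl <= LOW_BTL:
        return "approve (low-utilization segment)"
    return "approve (higher-utilization segment)"


consumer_a = Consumer("A", score=700, total_credit_limit=25_000, btl=0.08)
consumer_b = Consumer("B", score=700, total_credit_limit=15_000, btl=0.25)
print(decide(consumer_a))
print(decide(consumer_b))
```

Both consumers carry the same score, yet the attribute overlay places them in different segments, which is where a lender could attach different offers.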

In the 1970s, it took an average of 18 days before a decision could be made on a credit card application. Credit decisioning has come a long way since then, and today, we have the ability to make decisions faster than it takes to ring up a customer in person at the point of sale. Enabling real-time credit decisions helps retail and online merchants lay a platform for customer loyalty while incentivizing an increased customer basket size. While the benefits are clear, customers still are required to be at predetermined endpoints, such as at the receiving end of a prescreened credit offer in the mail, at a merchant point of sale applying for retail credit, or in front of a personal computer. The trends clearly show that customers are moving away from these predetermined touch-points: they find mailed credit offers antiquated, they spend less time at a retail point of sale because they prefer to shop online, and they are exchanging personal computers for tablets and smartphones. Despite remaining under 6 percent of retail spending, e-commerce sales for Q2 2013 were reportedly up 18.5 percent from Q2 2012, representing the largest year-over-year increase since Q4 2007, before the 2008 financial crisis. Fueled by a shift from personal computers to connected devices and the continuing maturation of e-commerce and m-commerce platforms, this trend is only expected to grow stronger in the future. To reflect this shift, marketers need to be asking themselves how they should apportion their budgets and energies to digital while executing broader marketing strategies that also may include traditional channels. Generally, traditional card acquisitions methods have failed to respond to these behavioral shifts, and, as a whole, retail banking was unprepared to handle the disintermediation of traditional products in favor of the convenience mobile offers. 
Now that the world of banking is finding its feet in the mobile space, accessibility to credit must also adapt to be on the customer’s terms, unencumbered by historical notions around customer and credit risk. Download this white paper to learn how credit and retail private-label issuers can provide an optimal customer experience in emerging channels such as mobile without sacrificing risk mitigation strategies — leading to increased conversions and satisfied customers. It will demonstrate strategies employed by credit and retail private-label issuers who already have made the shift from paper and point of sale to digital, and it provides recommendations that can be used as a business case and/or a road map.

By: Zach Smith On September 13, the Consumer Financial Protection Bureau (CFPB) announced final amendments to the mortgage rules that it issued earlier this year. The CFPB first issued the final mortgage rules in January 2013 and then released subsequent amendments in June. The final amendments also make some additional clarifications and revisions in response to concerns raised by stakeholders. The final modifications announced by the CFPB in September include: Amending the prohibition on certain servicing activities during the first 120 days of a delinquency to allow the delivery of certain notices required under state law that may provide beneficial information about legal aid, counseling, or other resources. Detailing the procedures that servicers should follow when they fail to identify or inform a borrower about missing information from loss mitigation applications, as well as revisions to simplify the offer of short-term forbearance plans to borrowers suffering temporary hardships. Clarifying best practices for informing borrowers about the address for error resolution documents. Exempting all small creditors, including those not operating predominantly in rural or underserved areas, from the ban on high-cost mortgages featuring balloon payments. This exemption will continue for the next two years while the CFPB re-examines the definitions of “rural” and “underserved.” Explaining the "financing” of credit insurance premiums to make clear that premiums are considered to be “financed” when a lender allows payments to be deferred past the month in which it’s due. Clarifying the circumstances when a bank’s teller or other administrative staff is considered to be a “loan originator” and the instances when manufactured housing employees may be classified as an originator under the rules. Clarifying and revising the definition of points and fees for purposes of the qualified mortgage cap on points and fees and the high-cost mortgage points and fees threshold. 
Revising effective dates of many loan originator compensation rules from January 10, 2014 to January 1, 2014. While the industry continues to advocate for an extension of the effective date to provide additional time to implement the necessary compliance requirements, the CFPB insists that both lenders and mortgage servicers have had ample time to comply with the rules. Most recently, in testimony before the House Financial Services Committee, CFPB Director Richard Cordray stated that “most of the institutions have told us that they will be in compliance” and he didn’t foresee further delays. Related Research: Experian's Global Consulting Practice recently released a white paper, CCAR: Getting to the Real Objective, that suggests how banks, reviewers and examiners can best actively manage CCAR's objectives with a clear dual strategy that includes both short-term and longer-term goals for stress-testing, modeling and system improvements. Download the paper to understand how CCAR is not a redundant set of regulatory compliance exercises; its effects on risk management include some demanding paradigm shifts from traditional approaches. The paper also reviews the macroeconomic facts around the Great Recession, revealing some useful insights for bank extreme-risk scenario development, econometric modeling and stress simulations. Related Posts: Where Business Models Worked, and Didn't, and Are Most Needed Now in Mortgages; Now That the CFPB Has Arrived, What's First on Its Agenda; Can the CFPB Bring Debt Collection Laws into the 21st Century

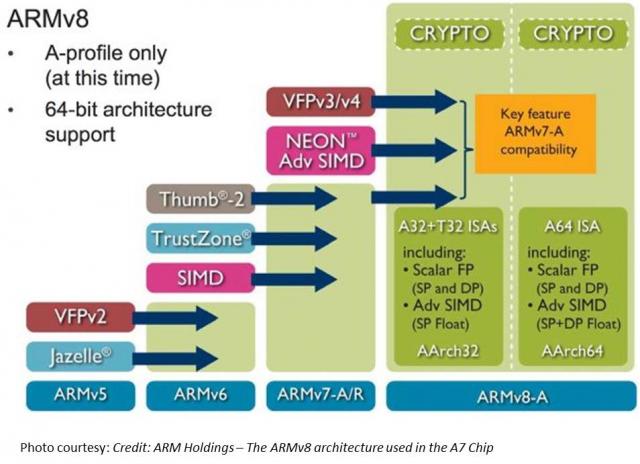

TL;DR Read on to see how Touch ID is made possible via ARM’s TrustZone/TEE, and why this matters in the context of Apple’s coming identity framework. I also explain why primary/co-processor combos are here to stay. I believe that eventually, Touch ID has a payments angle – but focusing on e-commerce before retail. Carriers will weep over a lost opportunity while through Touch ID, we have front row seats to Apple’s enterprise strategy, its payment strategy and beyond all – the future direction of its computing platform. I had shared my take on a possible Apple biometric solution in January of this year, based on its Authentec acquisition. I came pretty close, except for the suggestion that NFC is likely to be included. (Sigh.) It’s a bit early to play fast and loose with Apple predictions, but its Authentec acquisition should rear its head sometime in the near future (2013 – considering Apple’s manufacturing lead times), and a biometric solution packaged neatly with an NFC chip and secure element could address three factors that have held back customer adoption of biometrics: ubiquity of readers, issues around secure local storage and retrieval of biometric data, and standardization in accessing and communicating said data. An on-chip secure solution to store biometric data – in the phone’s secure element – can address qualms around a central database of biometric data open to all sorts of malicious attacks. Standard methods to store and retrieve credentials stored in the SE will apply here as well. Why didn’t Apple open up Touch ID to third-party developers? Apple expects a short bumpy climb ahead for Touch ID before it stabilizes, as early users begin to use it. By keeping its use limited to authenticating to the device, and to iTunes – it can tightly control the potential issues as they arise. If Touch ID had launched with third-party apps and been buggy, it’s likely that customers would have been confused about where to report issues and whom to blame. 
That’s not to say that it won’t open up Touch ID outside of Apple. I believe it will provide fettered access based on the type of app and the type of action that follows user authentication. Banking, Payment, Productivity, Social sharing and Shopping apps should come first. Your fart apps? Probably never. Apple could also allow users to set their preferences (for app categories, based on user’s current location etc.) such that biometrics is how one authenticates for transactions with risk vs not requiring it. If you are at home and buying an app for a buck – don’t ask to authenticate. But if you were initiating a money transfer – then you would. Even better – pair biometrics with your pin for better security. Chip and Pin? So passé. Digital Signatures, iPads and the DRM 2.0: It won’t be long before an iPad shows up in the wild sporting Touch ID. And with Blackberry’s much awaited and celebrated demise in the enterprise, Apple will be waiting on the sidelines – now with capabilities that allow digital signatures to become ubiquitous and simple – on email, contracts or anything worth putting a signature on. Apple has already made its iWork productivity apps(Pages, Numbers, Keynote), iMovie and iPhoto free for new iOS devices activated w/ iOS7. Apple, with a core fan base that includes photographers, designers and other creative types, can now further enable iPads and iPhones to become content creation devices, with the ability to attribute any digital content back to its creator by a set of biometric keys. Imagine a new way to digitally create and sign content, to freely share, without worrying about attribution. Further Apple’s existing DRM frameworks are strengthened with the ability to tag digital content that you download with your own set of biometric keys. Forget disallowing sharing content – Apple now has a way to create a secondary marketplace for its customers to resell or loan digital content, and drive incremental revenue for itself and content owners. 
Conclaves blowing smoke: In a day and age where we forego the device for storing credentials – whether it be due to convenience or ease of implementation – Apple opted for an on-device answer for where to store user’s biometric keys. There is a reason why it opted to do so – other than the obvious brouhaha that would have resulted if it chose to store these keys on the cloud. Keys inside the device. Signed content on the cloud. Best of both worlds. Biometric keys need to be held locally, so that authentication requires no roundtrip and therefore imposes no latency. Apple would have chosen local storage (ARM’s SecurCore) as a matter of customer experience, and what would happen if the customer was out-of-pocket with no internet access. There is also the obvious question that a centralized biometric keystore will be on the crosshairs of every malicious entity. By decentralizing it, Apple made it infinitely more difficult to scale an attack or potential vulnerability. More than the A7, the trojan in Apple’s announcement was the M7 chip – referred to as the motion co-processor. I believe the M7 chip does more than just measuring motion data. M7 – A security co-processor? I am positing that Apple is using ARM’s TrustZone foundation and it may be using the A7 or the new M7 co-processor for storing these keys and handling the secure backend processing required. Horace Dediu of Asymco had called to question why Apple had opted for M7 and suggested that it may have a yet un-stated use. I believe M7 is not just a motion co-processor, it is also a security co-processor. I am guessing M7 is based on the Cortex-M series processors and offloads much of this secure backend logic from the primary A7 processor and it may be that the keys themselves are likely to be stored here on M7. 
The Cortex-M4 chip has capabilities that sound very similar to what Apple announced around M7 – such as being a very low power chip that is built to integrate sensor output and wake up only when something interesting happens. We should know soon. This type of combo – splitting functions to be offloaded to different cores – allows each core to focus on the function that it’s supposed to perform. I suspect Android will not be far behind in its adoption, where each core focuses on one or more specific layers of the Android software stack. Back at Google I/O 2013, Google announced 3 new APIs (including the Fused location provider) that enable location tracking without the traditional heavy battery consumption. Looks to me that Android decoupled it so that we will see processor cores that focus on these functions specifically – soon. I am fairly confident that Apple has opted for ARM’s TrustZone/TEE. Implementation details of the TrustZone are proprietary and therefore not public. Apple could have made revisions to the A7 chip spec and could have co-opted its own. But using the TrustZone/TEE and SecurCore allows Apple to adopt existing standards around accessing and communicating biometric data. Apple is fully aware of the need to mature iOS as a trusted enterprise computing platform – to address the lack of low-end x86 devices that have hardware security platform tech. And this is a significant step towards that future. What does Touch ID mean to Payments? Apple’s plans for Touch ID kick off with iTunes purchase authorizations. Beyond that, as iTunes continues to grow into a media store behemoth – Touch ID has the potential to drive fraud risk down for Apple – and to further allow it to drive down risk as it batches up payment transactions to reduce interchange exposure. It’s quite likely that à la Walmart, Apple has negotiated rate reductions – but now they can assume more risk on the front-end because they are able to vouch for the authenticity of these transactions. 
As they say – the customer can no longer claim the fifth on those late-night weekend drunken purchase binges. Along with payment aggregation, or via iTunes gift cards – Apple now has another mechanism to reduce its interchange and risk exposure. Now – imagine if Apple were to extend this capability beyond iTunes purchases – and allow app developers to process in-app purchases of physical goods or real-world experiences through iTunes in return for better blended rates (instead of Paypal’s 4% + $0.30)? Heck, Apple can opt for short-term lending if they are able to effectively answer the question of identity – as they can with Touch ID. It’s Paypal’s ‘Bill Me Later’ on steroids. Apple has seriously toyed with the idea of a Software-SIM and a “real-time wireless provider marketplace” where carriers bid against each other to provide you voice, messaging and data access for the day – and your phone picks the optimal carrier. How far is that notion from picking the cheapest rate across networks for funneling your payment transactions? Based on the level of authentication provided or other known attributes – such as merchant type, location, fraud risk, customer payment history – iTunes can select across a variety of payment options to pick the one that is optimal for the app developer and for itself. And finally, who had the most to lose with Apple’s Touch ID? Carriers. I wrote about this before as well; here’s what I wrote then (edited for brevity): Does it mean that Carriers have no meaningful role to play in commerce? Au contraire. They do. But it’s around fraud and authentication. It’s around Identity. … But they seem to be stuck imitating Google in figuring out a play at the front end of the purchase funnel, to become a consumer brand (Isis). 
The last thing they want to do is leave it to Apple to figure out the “Identity management” question, which the latter seems best equipped to answer by way of scale, the control it exerts in the ecosystem, its vertical integration strategy that allows it to fold in biometrics meaningfully in to its lineup, and to start with its own services to offer customer value. So there had to have been much ‘weeping and moaning and gnashing of the teeth’ on the Carrier fronts with this launch. Carriers have been so focused on carving out a place in payments, that they lost track of what’s important – that once you have solved authentication, payments is nothing but accounting. I didn’t say that. Ross Anderson of Kansas City Fed did. What about NFC? I don’t have a bloody clue. Maybe iPhone6? This is a re-post from Cherian's original blog post "Smoke is rising from Apple's Conclave"

By: Matt Sifferlen I recently read interesting articles on the Knowledge@Wharton and CNNMoney sites covering the land grab that's taking place among financial services startups that are trying to use a consumer's social media activity and data to make lending decisions. Each of these companies is looking at ways to take the mountains of social media data that sites such as Twitter, Facebook, and LinkedIn generate in order to create new and improved algorithms that will help lenders target potential creditworthy individuals. What are they looking at specifically? Some criteria could be: a history of typing in ALL CAPS or all lower case letters; frequent usage of inappropriate comments; the number of senior level connections on LinkedIn; and the quantity of posts containing cats or annoying self-portraits (aka "selfies"). Okay, I made that last one up. The point is that these companies are scouring through the data that individuals are creating on social sites and trying to find useful ways to slice and dice it in order to evaluate and target consumers better. On the consumer banking side of the house, there are benefits for tracking down individuals for marketing and collections purposes. A simple search could yield a person's Facebook, Twitter, or LinkedIn profile. The behavioral information can then be leveraged as a part of more targeted multi-channel and contact strategies. On the commercial banking side, utilizing social site info can help to supplement any traditional underwriting practices. Reviewing the history of a company's reviews on Yelp or Angie's List could share some insight into how a business is perceived and reveal whether there is any meaningful trend in the level of negative feedback being posted or potential growth outlook of the company. There are some challenges involved with leveraging social media data for these purposes: (1) easily manipulated information, (2) irrelevant information that doesn't represent actual likes, thoughts or relevant behaviors, and (3) regulations. From a fraud perspective, most online information can easily and frequently be manipulated, which can create a constantly moving target for these providers to monitor and link to the right customer. Fake Facebook and Twitter pages, false connections and referrals on LinkedIn, and fabricated positive online reviews of a business can all be accomplished in a matter of minutes. And commercial fraudsters are likely creating false business social media accounts today for shelf company fraud schemes that they plan on hatching months or years down the road. As B2B review websites continue to make it easier to get customers signed up to use their services, the downside is there will be even more unusable information being created since there are fewer and fewer hurdles for commercial fraudsters to clear, particularly for sites that offer their services for free. For now, the larger lenders are more likely to utilize alternative data sources that are third party validated, like rent and utility payment histories, while continuing to rely on tools that can protect against fraud schemes. It will be interesting to see what new credit and non-credit data will be utilized as a common practice in the future as lenders continue their efforts to find more useful data to power their credit and marketing decisions.

By: Joel Pruis As we go through the economic seasons, we need to remember to reassess our strategy. While we use data as the way to accurately assess the environment and determine the best course of action for your future strategy, the one thing that is for certain is that the current environment will definitely change. Aspects that we did not anticipate will develop, trends may start to slow or change direction. Moneyball continues to be a movie that gives us some great examples. We see that Billy Beane and Peter Brand were constantly looking at their position and making adjustments to the team’s roster. Even before they made any significant adjustments, Beane and Brand found themselves justifying their strategy to the owner (even though the primary issue was with the head coach not playing the roster that maximized the team’s probability of winning). The first aspect that worked against the strategy was the head coach and while we could go down a tangent about cultural battles within an organization, let's focus on how Beane adjusted. Beane simply traded the players the head coach preferred to play forcing the use of players preferred by Beane and Brand. Later we see Beane and Brand making final adjustments to the roster by negotiating trades resulting in the Oakland A’s landing Ricardo Rincon. The change in the league that allowed such a trade was that Rincon’s team was not doing well and the timing allowed the A’s to execute the trade. Beane adjusted with the changes in the league. One thing to note, is that he changed the roster while the team was doing well. They were winning but Beane made adjustments to continue maximizing the team’s potential. Too often we adjust when things are going poorly and do not adjust when we seem to be hitting our targets. Overall, we need to continually assess what has changed in our environment and determine what new challenges or new opportunities these changes present. 
I encourage you to regularly assess what is happening in your local economy. High-level national trends are constantly on the front page of the news but we need to drill down to see what is happening in a specific market area being served. As Billy Beane did with the Oakland A’s throughout the season, I challenge you to assess your current strategies and execution against what is happening in your market territory. Related posts: How Financial Institutions can assess the overall conditions for generating the net yield on the assets; How to create decision strategies for small business lending; Upcoming Webinar: Learn about the current state of small business, the economy and how it applies to you

If you're looking to implement and deploy a knowledge-based authentication (KBA) solution in your application process for your online and mobile customer acquisition channels, then I have good news for you! Here’s some of the upside you’ll see right away. Revenues (remember, the primary activities of your business?) will accelerate. Your B2C acceptance or approval rates will go up through automation. Manual review of customer applications will go down, and that translates to a reduction in your business operation costs. Products will be sold and shipped faster if you’re in the retail business, so you can recognize the sales revenue or net sales quicker. Your customers will appreciate the fact that they can do business in minutes vs. going through a lengthy application approval process with turnaround times of days to weeks. And last but not least, your losses due to fraud will go down. To keep you informed about what’s relevant when choosing a KBA vendor, here’s what separates the good KBA providers from the bad. The underlying data used to create questions should be from multiple data sources and should vary in the type of data, for example credit and non-credit. Relying on public record data sources is becoming a risky proposition given recent adoption of various social media websites and various public record websites. Good providers have technology that will allow you to create a custom KBA setup that is unique to your business and business customers, and the proven support structure to help you grow your business safely. They provide consulting (performance monitoring) and analytical support that will keep you ahead of the fraudsters trying to game your online environment by assuring your KBA tool is performing at optimal levels. And they offer solutions that can easily interface with multiple systems and assist from a customer experience perspective. How are your peers in the following 3 industries doing at adopting a KBA strategy to help grow and protect their businesses? 
E-commerce: 21% use KBA today and are satisfied with the results*; 13% have KBA on the roadmap and the list is growing fast*. Healthcare: 20% use dynamic KBA*. Financial Institutions: 30% use a combination of dynamic & static KBA*; 20% use dynamic KBA*. What are the typical uses of KBA?* Call center; web / mobile verification; enrollment ID verification; provider authentication; eligibility. *According to a 2012 report on knowledge-based authentication by Aite Group LLC. Knowledge-based authentication, commonly referred to as KBA, is a method of authentication which seeks to prove the identity of someone accessing a service, such as a website. As the name suggests, KBA requires the knowledge of personal information of the individual to grant access to the protected material. There are two types of KBA: "static KBA", which is based on a pre-agreed set of "shared secrets"; and "dynamic KBA", which is based on questions generated from a wider base of personal information.

There are two core fundamentals of evaluating loan loss performance to consider when generating organic portfolio growth through the setting of customer lending limits. Neither can be discussed without first considering what defines a “customer.” Definition of a customer: The approach used to define a customer is critical for successful customer management and is directly correlated to how joint accounts are managed. Definitions may vary by how joint accounts are allocated and used in risk evaluation. It is important to acknowledge legal restrictions for data usage related to joint account holders throughout the relationship; the impact on predictive model performance and reporting where there are two financially linked individuals with differently assigned exposures; and the complexities of multiple relationships with customers within the same household, both consumer and small business. Typical customer definitions used by financial services organizations are as follows. Checking account holders: This definition groups together accounts that are “fed” by the same checking account. If an individual holds two checking accounts, then she will be treated as two different and unique customers. Physical persons: Joint accounts are allocated to each individual. If Mr. Jones has sole accounts and holds joint accounts with Ms. Smith who also has sole accounts, the joint accounts would be allocated to both Mr. Jones and Ms. Smith. Consistent entities: If Mr. Jones has sole accounts and holds joint accounts with Ms. Smith who also has sole accounts, then 3 “customers” are defined: Jones, Jones & Smith, Smith. Financially-linked individuals: Whereas consistent entities are considered three separate customers, financially-linked individuals would be considered one customer: “Mr. Jones & Ms. Smith”. When multiple and complex relationships exist, taking a pragmatic approach to define your customers as financially-linked will lead to a better evaluation of predicted loan performance. 
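To make the difference between these definitions concrete, here is a minimal Python sketch that applies three of them to the Jones and Smith example. The account names and function names are hypothetical, and the sketch assumes every account has at least one holder; a real implementation would work from whatever account and holder tables the institution maintains.

```python
from collections import defaultdict

# Hypothetical data: account id -> set of account holders.
ACCOUNTS = {
    "jones-sole": {"Jones"},
    "joint": {"Jones", "Smith"},
    "smith-sole": {"Smith"},
}


def physical_persons(accounts):
    """Joint accounts are allocated to each individual holder."""
    customers = defaultdict(set)
    for account, holders in accounts.items():
        for holder in holders:
            customers[holder].add(account)
    return dict(customers)


def consistent_entities(accounts):
    """Each distinct combination of holders is its own customer."""
    customers = defaultdict(set)
    for account, holders in accounts.items():
        customers[frozenset(holders)].add(account)
    return dict(customers)


def financially_linked(accounts):
    """People connected through any joint account form one customer."""
    parent = {}

    def find(person):
        parent.setdefault(person, person)
        while parent[person] != person:
            parent[person] = parent[parent[person]]  # path compression
            person = parent[person]
        return person

    # Link all holders of the same account together (union-find).
    for holders in accounts.values():
        first, *rest = sorted(holders)
        find(first)
        for other in rest:
            parent[find(other)] = find(first)

    # Collect the people and the accounts belonging to each linked group.
    people = defaultdict(set)
    for person in parent:
        people[find(person)].add(person)
    customers = defaultdict(set)
    for account, holders in accounts.items():
        root = find(sorted(holders)[0])
        customers[frozenset(people[root])].add(account)
    return dict(customers)


print(len(physical_persons(ACCOUNTS)))     # customers under "physical persons"
print(len(consistent_entities(ACCOUNTS)))  # customers under "consistent entities"
print(len(financially_linked(ACCOUNTS)))   # customers under "financially-linked"
```

The same three accounts yield two, three or one customer depending on the definition, which is why the definition has to be settled before exposures are aggregated.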
Evaluation of credit and default risk

Most financial institutions calculate a loan default probability on a periodic basis (monthly) for existing loans, in the format of either a custom behavior score or a generic risk score supplied by a credit bureau. For new loan requests, financial institutions often calculate an application risk score, sometimes used in conjunction with a credit bureau score, often in a matrix-based decision. This approach is challenging for new credit requests where the presence and nature of the existing relationship is not factored into the decision. In most cases, customers with existing relationships are treated in an identical manner to new applicants with no relationship. The power and value of the organization’s internal data goes overlooked, and customer satisfaction and profits suffer as a result. One way to overcome this challenge is to use a Strength of Relationship (SOR) indicator.

Strength of Relationship (SOR) indicator

The Strength of Relationship (SOR) indicator is a single-digit value used to define the nature of the customer’s relationship with the financial institution. Traditional approaches for the assignment of a SOR are based upon the following factors: existence of a primary banking relationship (salary deposits); number of transactional products held (DDA, credit cards); volume of transactions; number of loan products held; and length of time with the bank. The SOR has a critical role in the calculation of customer level risk grades and strategies and is used to point us to the data that will be the most predictive for each customer. Typically the stronger the relationship, the more we know about our customer, and the more robust the predictive models of consumer behavior will be. The more information we have on our customer, the more our models will lean towards internal data as the primary source.
For weaker relationships, internal data alone may not be robust enough to calculate customer level limits, and there will be a greater need to augment internal data with external third party data (credit bureau attributes). As such, the SOR can be used as a tool to select the type and frequency of external data purchase.

Customer Risk Grade (CRG)

A customer-level risk grade or behavior score is a periodic (monthly) statistical assessment of the default risk of an existing customer. This probability uses the assumption that past performance is the best possible indicator of future performance. The predictive model is calibrated to provide the probability (or odds) that an individual will incur a “default” on one or more of their accounts. The customer risk grade requires a common definition of a customer across the enterprise. This is required to establish a methodology for treating joint accounts. A unique customer reference number is assigned to those customers defined as “financially-linked individuals”. Account behavior is aggregated on a monthly basis and this information is subsequently combined with information from savings accounts and third party sources to formulate our customer view. Using historical customer information, the behavior score can accurately differentiate between good and bad credit risk individuals. The behavior score is often translated into a Customer Risk Grade (CRG). The purpose of the CRG is to simplify the behavior score for operational purposes: a grade is easier for non-credit/risk individuals to interpret than a mathematical probability. Different methods for evaluating credit risk will yield different results, and an important aspect in the setting of customer exposure thresholds is the ability to perform analytical tests of different strategies in a controlled environment. In my next post, I’ll dive deeper into adaptive control, champion challenger techniques and strategy design fundamentals.
Related content: White paper: Improving decisions across the Customer Life Cycle

By: Joel Pruis

So we know we need to determine the overall net yield on assets required to cover the cost of funds and the operating expenses, but how? In the movie Moneyball, the Oakland A’s develop a strategy to win 99 games by scoring 814 runs and only allowing 645 runs by the opposition. In order to generate the necessary runs, Peter Brand boils down all the stats into one number, on-base percentage. By looking at the on-base percentage of all the players in the league, Brand is able to determine the likelihood of generating runs. There are a few key phrases/quotes from this scene that need to be highlighted: “it’s about getting things down to one number”; “People are overlooked for a variety of biased reasons and ‘perceived’ flaws”; and “Bill James and mathematics cut straight through that [biased reasons and perceived flaws].” Getting things down to one number is the liberating element for the Oakland A’s and for banking. We have already identified the one number for banking: Net Yield on Assets. Let’s define this a bit further though. For this exercise, net yield means the gross yield (interest income plus fee income) on assets less charge offs. We are looking to see what the consistent return on the assets will be, less the net charge offs that can be expected on those assets. When Billy Beane and Peter Brand got it down to the one number, “On Base Percentage,” it altered the player selection process and highlighted the biases of the scouts, such as: Giambi’s brother was “getting a little thick around the waist”; “Old Man” Justice; Justice will be “lucky if he hits his weight” in July and August; Justice’s “legs are gone”; Hatteberg “can’t throw”; Hatteberg’s “best part of his career is over”; and Hatteberg “walks a lot.” None of the above comments used any facts or data to disprove each player’s on-base percentage. Can you imagine if they were underwriters or lenders? What type of compliance issues would we have on our hands with the above comments?
Biased against disabilities (Hatteberg with nerve damage); Age Discrimination (“Old Man” Justice), Physical Appearance (Giambi’s brother “getting a little thick around the waist”), these scouts would be a compliance liability let alone obstacles in any type of organizational change. But one can readily see how focusing on one number liberates the thinking and removes the old constraints or ways of thinking. One of the scouts commented that Hatteberg had a high on base percentage because he walks a lot, considering a walk as a negative while a hit is a positive but why? Why is getting on base by being walked a negative but getting on base with a hit is positive? The result is the same as the movie points out. How about in commercial lending? If we focus on net yield on the portfolio as the one number, does that do anything to remove biases? I believe that it does. One example is the perception of charge offs in a portfolio. To this day the notion of a charge off in a commercial portfolio, even in the small business portfolio, is frowned upon and can jeopardize one’s career. Similar to the walk, the charge off is not desired but if we focus on the one number, net yield, it actually removes the stigma of the charge off! If we need at minimum a 6% net asset yield and we are able to generate a gross yield of 9% with an expected loss rate of 2%, we actually exceed our “one number” of a targeted net yield of 6% with an expected net yield of 7%. With that change that removes the biases and flawed perception, can we now start to find opportunities that provide us with the ability to step away from the norm; stop competing with the rest; and generate that higher return that is required? What are the potential biases and flawed perceptions that will need to be addressed? “High Risk” Industries? “Undesired” Loan types? Consumer vs. Commercial? Real Estate Secured vs. Unsecured? Loans vs. Treasuries or other earning asset types? 
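The “one number” arithmetic above can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, the 9%, 2% and 6% figures are the ones used in the example above, and the second loan (5.5% gross yield, 0.25% expected loss) is an invented comparison, not data from any portfolio. `Decimal` is used so that the percentages subtract exactly.

```python
from decimal import Decimal


def net_yield(gross_yield_pct: str, expected_loss_pct: str) -> Decimal:
    """Net yield in percent: gross yield (interest plus fee income) less expected charge offs."""
    return Decimal(gross_yield_pct) - Decimal(expected_loss_pct)


def meets_target(gross_yield_pct: str, expected_loss_pct: str, target_pct: str) -> bool:
    """True when the expected net yield reaches the targeted net yield."""
    return net_yield(gross_yield_pct, expected_loss_pct) >= Decimal(target_pct)


# Example from the text: 9% gross yield, 2% expected loss, 6% target.
print(net_yield("9", "2"))          # 7
print(meets_target("9", "2", "6"))  # True

# Hypothetical "cleaner" loan: lower loss rate, but also a lower gross yield.
print(net_yield("5.5", "0.25"))          # 5.25
print(meets_target("5.5", "0.25", "6"))  # False
```

In this comparison the loan with the higher expected charge offs is the one that clears the target, which is the point of judging on net yield rather than on loss rate alone.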
But just as in the movie, you need to be prepared for the response you may get from the traditional ‘seasoned’ lenders in your organization. When Billy Beane puts the new strategy into place at the Oakland A’s, the lead scout responds with: “You don’t put a team together with a computer”; “Baseball isn’t just numbers, it isn’t science. If it was anybody could do what we do but they can’t”; and “They don’t know what we know. They don’t have our experience and they don’t have our intuition.” Ah, just like the traditional baseball scout is the traditional commercial lender with the years of experience, judgment and intuition. I used to be one and used almost word for word the same argument against credit scoring and small business before I truly understood what it was all about. Don’t get me wrong. Experience, judgment and intuition are valuable and necessary. But that type of judgment tends to get into trouble when it stops looking outside for data and relies only on past personal experience to assess the next moves. Experience is always important, but it has to continually review, assess and interpret the data. So let’s start looking at the different types of data. On deck: How do we know how many runs the opposition is going to score? The use of external data.

By: Joel Pruis

I am going to take some liberties here. Nowhere in the movie Moneyball does Peter Brand tell us how he got to the magic number of winning 99 games to get to the playoffs. My assumption is that given the way that he evaluates the Oakland A’s, he also evaluates the other teams in their conference. Assessing the competitive landscape provides Brand with the estimated runs their opponents will generate. Now we could take the approach that such analysis would correlate to assessing how your competition is going to perform, but I am going to take a different approach. I would compare the conference assessment in Moneyball to an economic forecast/assessment. We need to assess the overall conditions in which we must operate that will allow us to generate the net yield on the assets of our financial institution. Some of the things we need to assess to determine what we will be able to generate related to the net yield on assets would be:

Gross yield on assets: the current interest rate environment (yield on treasuries, federal home loan bank, etc.) and interest rate trends (increasing, declining, trends toward fixed rates, variable rates).

Industry information: the general trend of businesses across the nation (How are businesses faring? How well are they paying their creditors? Are they relying more or less on credit? Are new businesses being started? Are they succeeding? Are they failing?) and the general trends (same as above) within your financial institution’s market footprint.

One such source of the industry information is the Small Business Credit Index generated by Experian & Moody’s Analytics. In the recent release of the Small Business Credit Index, small business strengthened from the prior quarter, with the index moving from 104.3 to 109. But this is from a national perspective. Whatever your financial institution, it is important to always get an overall view of the economy but, more importantly, to know what is happening in your particular market footprint.
Just as the Oakland A’s in Moneyball maintained an overall perspective of Major League Baseball, their focus for success was targeting their specific conference to reach the playoffs. So as we look at information such as the Small Business Credit Index, we are able to see highlights of regional trends (certain states west of the Mississippi are doing better while certain states along the east coast are not) and specific industry trends. From such data we need to drill down into our specific footprint and current portfolio. We need to review such items as: What industry concentrations do we have that are doing well in the economy and how is our portfolio doing compared to the external data? What industries are we not engaging that may provide a good opportunity for our financial institution? What changes are taking place in the general economy that may impact our ability to achieve our expected results? What external factors must we be monitoring that may impact our strategy (such as the impact of Obamacare and how it will impact the hiring for businesses with more than 50 employees?) Just as in Moneyball, Brand continues to monitor the performance of the overall league (and the individual players for future trades), we need to continually monitor the national, state and local economies to determine what adjustments we will need to make to achieve our strategies. So we have assessed the general environment, on to strategies or “How do we win 99 games with a total payroll of $38 million?”

By: Joel Pruis

What is it we as bankers are trying to accomplish? If you have been in the industry for 20+ years, this question may sound ridiculous! We do what we do! We are bankers! What do you mean, define what we are trying to do? But that is the question: what is it we are trying to do? I am going to propose we boil it down to the basic/fundamental element: banks aggregate money from various sources and redeploy these funds to earn a return for the shareholders. Ultimately, our objective is to generate an appropriate return for the shareholders. Getting back to the movie Moneyball, Billy Beane and Peter Brand define the objective of the Oakland A’s for the season in terms of projecting the number of wins that are needed to assure, with all probability, that the team makes the playoffs (this would be similar to the objective of banking to generate an appropriate return for the shareholders). But Peter Brand quickly moves into very specific targets that are required for the A’s to make it to the playoffs, namely win 99 regular season games. In order to win 99 regular season games, the A’s offense will need to score 814 runs in the season and defensively only allow 645 runs. Plain and simple. Very objective, very measurable and it is all based upon data, data, data. Let’s break this down. Based upon their conference, the teams in their conference along with the overall schedule, Peter Brand projects that 99 wins are necessary to land a spot in the playoffs. No gut check, no darts or crystal ball but rather historical data that, when analyzed, provides the benchmark of 99 wins to statistically assure the Oakland A’s that they will make the playoffs. So let’s apply this to banking. Our objective is to generate the appropriate return for our shareholders or the old Return on Equity.
So, for example, if our targeted return on equity is 20% (making the playoffs) we need to make sure we generate enough net income (99 wins) through producing the necessary gross yield on assets (814 runs generated by the Oakland A’s offense) less the expected charge offs (645 runs allowed by the Oakland A’s defense). For a quick dive into details, our data would provide for a margin of error on the variable to provide for statistical assurance of achieving the objective (Return on Equity). In the movie there is no guaranty that the 814 runs will win the conference but at the same time there is no guaranty that the Oakland A’s opponents will score 645 runs. Never in the movie does the coach, Billy Beane or Peter Brand tell the team, “You only have to score X number of runs this game, don’t score anymore.” Or even crazier, “You are not letting the other team score enough runs, they need to score 645!” No, the strategy is still to generate as many runs as possible while minimizing the number of runs scored by the opposition. Rather it is the review of the total amount of earning assets of the financial institution and the overall credit quality that we must understand and control to determine our ability to generate the net yield on assets required to generate the return on equity that is required. If we assume too much risk in the portfolio in order to generate the required yield it would be similar to having a poor pitching staff projected to allow 10 runs a game requiring the team to produce 11 runs a game in order to win. It just is not realistic. So basically we need to assess at the high level, are we appropriately structured to allow for the generation of enough profit to provide the appropriate return on equity. At this point, we do not need to complicate it any further than that. Now let’s take a look at the constraints. We know we have them in banking, let’s take a look at probably the single biggest constraint imposed on Billy Beane and the Oakland A’s. 
In the movie, before Billy Beane is even aware of the Moneyball concept, he is given his constraint by the owner. Beane asks for more money to ‘buy players’ and is flat out rejected by the owner. The owner, in fact, cuts Beane off by asking, “is there anything else I can do for you?” The net result is that the Oakland A’s have $38 million for payroll vs. the New York Yankees at $120 million. Seriously, it does not seem fair. How can you attract the needed talent when you cannot pay the type of salary needed to get the necessary players to win a championship? Let’s rephrase this for banking: How can a bank be expected to deploy its assets when such a high rate of return is required? Boiling it down to a specific example, “How can I originate a commercial loan at this rate of interest when the competition is ½ to 1% lower than our rates?” Up next: Why will 99 games get us to the playoffs? How do we assess the environment?

By: Joel Pruis

Times are definitely different in the banking world today. Regulations, competition from other areas, specialized lenders and different lending methods have all resulted in the competitive landscape we have today. One area that is significantly different today, and for the better, is the availability of data. Data from our core accounting systems, data from our loan origination systems, data from the credit bureaus for consumer and for business. You name it, there is likely a data source that at least touches on the area if not provides full coverage. But what are we doing with all this data? How are we using it to improve our business model in the banking environment? Does it even factor into the equation when we are making tactical or strategic decisions affecting our business? Unfortunately, I too often see business decisions being made based upon anecdotal evidence without considering the actual data. Let’s take, for example, Major League Baseball. How many statistics have been gathered on baseball? I remember as a boy keeping the stats while attending a Detroit Tigers game, writing down the lineup, what happened when each player was up to bat, strikes, balls, hits, outs, etc. A lot of stats, but were they the right stats? How did these stats correlate to whether the team won or lost? Does the performance in one game translate into predictable performance of an entire season for a player or a team? Obviously one game does not determine an entire season, but how often do we reference a single event as the basis for a strategic decision? How often do we make decisions based upon traditional methods without questioning why? Do we even reference traditional stats when making strategic decisions? Or do we make decisions based upon other factors as the scouts of the Oakland A’s were doing in the movie Moneyball? In one scene of the movie, Billy Beane, general manager of the A’s, is asking his team of scouts to define the problem they are trying to solve.
The responses are all very subjective in nature and only correlate to how to replace “talented” players that were lost due to contract negotiations, etc. Nowhere in this scene do any of the scouts provide any true stats for who they want to pursue to replace the players they just lost. Everything that the scouts are talking about relates to singular assessments of traits that have not been demonstrated to correlate to a team making the playoffs let alone win a single game. The scouts with all of their experience focus on the player’s swing, ability to throw, running speed, etc. At one point the scouts even talk about the appearance of the player’s girlfriends! But what if we changed how we looked at the sport of baseball? What if we modified the stats used to compile a team; determine how much to pay for an individual player? The movie Moneyball highlights this assessment of the conventional stats and their impact or correlation to a team actually winning games and more importantly the overall regular season. Bill James is given the credit in the movie for developing the methodology ultimately used by the Oakland A’s in the movie. This methodology is also referred to as Sabermetrics. In another scene, Peter Brand, explains how baseball is stuck in the old style of thinking. The traditional perspective is to buy ‘players’. In viewing baseball as buying players, the traditional baseball industry has created a model/profile of what is a successful or valuable player. Buy the right talent and then hopefully the team will win. Instead, Brand changes the buy from players to buying wins. Buying wins which require buying runs, in other words, buy enough average runs per game and you should outscore your opponent and win enough games to win your conference. But why does that mean we would have to change the way that we look at the individual players? Doesn’t a high batting average have some correlation to the number of runs scored? 
Don’t RBIs (runs batted in) have some level of correlation to runs? I’m sure there is some correlation, but as you start to look at the entire team or the development of the lineup for any given game, do these stats/metrics have the best correlation to lead to greater predictability of a win or, more specifically, the predictability of a winning season? Similarly, regardless of how we as bankers have made strategic decisions in the past, it is clear that we have to first figure out what it is exactly we are trying to solve, what we are trying to accomplish. We have the buzzwords, the traditional responses, the non-specific high level descriptions that ultimately leave us with no specific direction. Ultimately it allows us to just continue the business as usual approach and hope for the best. In the next few blogs, we will continue to use the movie Moneyball as the backdrop for how we need to stir things up, identify exactly what it is we are trying to solve and figure out how to best approach the solution.

By: Maria Moynihan

Cybersecurity, identity management and fraud are common and prevalent challenges across both the public sector and private sector. Industries as diverse as credit card issuers, retail banking, telecom service providers and eCommerce merchants are faced with fraud threats ranging from first party fraud and commercial fraud to identity theft. If you think that the problem isn't as bad as it seems, the statistics speak for themselves: fraud accounts for 19% of the $600 billion to $800 billion in waste in the U.S. healthcare system annually; medical identity theft makes up about 3% of 8.3 million overall victims of identity theft; in 2011, there were 431 million adult victims of cybercrime in 24 countries; and in fiscal year 2012, the IRS’ specialized identity theft unit saw a 78% spike from last year in the number of ID theft cases submitted. The public sector can easily apply the same best practices found in the private sector for ID verification, fraud detection and risk mitigation. Here are four surefire ways to get ahead of the problem: implement a risk-based authentication process in citizen enrollment and account management programs; include the right depth and breadth of data through public and private sources to best identity proof businesses or citizens; offer real-time identity verification while ensuring security and privacy of information; and provide a Knowledge Based Authentication (KBA) software solution that asks applicants approved random questions based on “out-of-wallet” data. What fraud protection tactics has your organization implemented? See what industry experts suggest as best practices for fraud protection and stay tuned as I share more on this topic in future posts. You can view past Public Sector blog posts here.

By: Maria Moynihan State and Federal agencies are tasked with overseeing the integration of new Health Insurance Exchanges and with that responsibility, comes the effort of managing information updates, ensuring smooth data transfer, and implementing proper security measures. The migration process for HIEs is no simple undertaking, but with these three easy steps, agencies can plan for a smooth transition: Step 1: Ensure all current contact information is accurate with the aid of a back-end cleansing tool. Back-end tools clean and enhance existing address records and can help agencies to maintain the validity of records over time. Step 2: Duplicate identification is a critical component of any successful database migration - by identifying and removing existing duplicate records, and preventing future creation of duplicates, constituents are prevented from opening multiple cases, thereby reducing the probability for fraud. Step 3: Validate contact data as it is captured. This step is extremely important, especially as information gets captured across multiple touch points and portals. Contact record validation and authentication is a best practice for any database or system gateway. Agencies and those particularly responsible for the successful launches of HIEs are expected to leverage advanced technology, data and sophisticated tools to improve efficiencies, quality of care and patient safety. Without accurate, standard and verified contact information, none of that is possible. Access the full Health Insurance Exchange Toolkit by clicking here.